Oct 4th 2014

The Economist

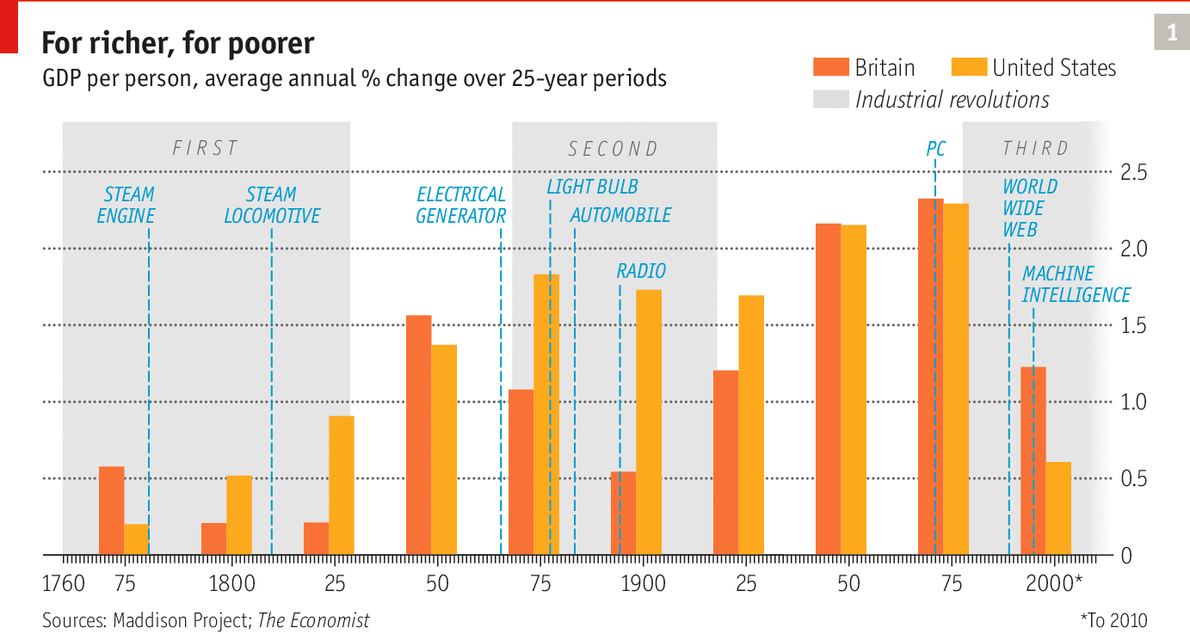

MOST PEOPLE ARE discomfited by radical change, and often for good reason. Both the first Industrial Revolution, starting in the late 18th century, and the second one, around 100 years later, had their victims who lost their jobs to Cartwright’s power loom and later to Edison’s electric lighting, Benz’s horseless carriage and countless other inventions that changed the world. But those inventions also immeasurably improved many people’s lives, sweeping away old economic structures and transforming society. They created new economic opportunity on a mass scale, with plenty of new work to replace the old.

A third great wave of invention and economic disruption, set off by advances in computing and information and communication technology (ICT) in the late 20th century, promises to deliver a similar mixture of social stress and economic transformation. It is driven by a handful of technologies—including machine intelligence, the ubiquitous web and advanced robotics—capable of delivering many remarkable innovations: unmanned vehicles; pilotless drones; machines that can instantly translate hundreds of languages; mobile technology that eliminates the distance between doctor and patient, teacher and student. Whether the digital revolution will bring mass job creation to make up for its mass job destruction remains to be seen.

Powerful, ubiquitous computing was made possible by the development of the integrated circuit in the 1950s. Under a rough rule of thumb known as Moore’s law (after Gordon Moore, one of the founders of Intel, a chipmaker), the number of transistors that could be squeezed onto a chip has been doubling every two years or so. This exponential growth has resulted in ever smaller, better and cheaper electronic devices. The smartphones now carried by consumers the world over have vastly more processing power than the supercomputers of the 1960s.

Moore’s law is now approaching the end of its working life. Transistors have become so small that shrinking them further is likely to push up their cost rather than reduce it. Yet commercially available computing power continues to get cheaper. Both Google and Amazon are slashing the price of cloud computing to customers. And firms are getting much better at making use of that computing power. In a book published in 2011, “Race Against the Machine”, Erik Brynjolfsson and Andrew McAfee cite an analysis suggesting that between 1988 and 2003 the effectiveness of computers increased 43m-fold. Better processors accounted for only a minor part of this improvement. The lion’s share came from more efficient algorithms.

The beneficial effects of this rise in computing power have been slow to come through. The reasons are often illustrated by a story about chessboards and rice. A man invents a new game, chess, and presents it to his king. The king likes it so much that he offers the inventor a reward of his choice. The man asks for one grain of rice for the first square of his chessboard, two for the second, four for the third and so on to 64. The king readily agrees, believing the request to be surprisingly modest. They start counting out the rice, and at first the amounts are tiny. But they keep doubling, and soon the next square already requires the output of a large ricefield. Not long afterwards the king has to concede defeat: even his vast riches are insufficient to provide a mountain of rice the size of Everest. Exponential growth, in other words, looks negligible until it suddenly becomes unmanageable.
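The arithmetic behind the story is easy to check. The sketch below is illustrative only: the function names are invented here, and it assumes exactly what the story states, one grain on the first square and a doubling on each of the 64 squares.

```python
def grains_on_square(square: int) -> int:
    """Grains owed for one square (1-based): 1, 2, 4, ... doubling each time."""
    if not 1 <= square <= 64:
        raise ValueError("square must be between 1 and 64")
    return 2 ** (square - 1)


def cumulative_grains(square: int) -> int:
    """Total grains owed for squares 1 through `square` inclusive."""
    return sum(grains_on_square(s) for s in range(1, square + 1))


if __name__ == "__main__":
    # Running totals at a few points along the board.
    for square in (8, 16, 32, 64):
        print(f"after square {square:2d}: {cumulative_grains(square):,} grains")
```

The first 32 squares together need about 4.3 billion grains. Square 33 alone needs more than all of them combined, and the full board comes to 18,446,744,073,709,551,615 grains.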

Messrs Brynjolfsson and McAfee argue that progress in ICT has now brought humanity to the start of the second half of the chessboard. Computing problems that looked insoluble a few years ago have been cracked. In a book published in 2005 Frank Levy and Richard Murnane, two economists, described driving a car on a busy street as such a complex task that it could not possibly be mastered by a computer. Yet only a few years later Google unveiled a small fleet of driverless cars. Most manufacturers are now developing autonomous or near-autonomous vehicles. A critical threshold seems to have been crossed, allowing programmers to use clever algorithms and massive amounts of cheap processing power to wring a semblance of intelligence from circuitry.

Evidence of this is all around. Until recently machines have found it difficult to “understand” written or spoken language, or to deal with complex visual images, but now they seem to be getting to grips with such things. Apple’s Siri responds accurately to many voice commands and can take dictation for e-mails and memos. Google’s translation program is lightning-fast and increasingly accurate, and the company’s computers are becoming better at understanding just what its cameras (as used, for example, to compile Google Maps) are looking at.

At the same time hardware, from processors to cameras to sensors, continues to get better, smaller and cheaper, opening up opportunities for drones, robots and wearable computers. And innovation is spilling into new areas: in finance, for example, crypto-currencies like Bitcoin hint at new payment technologies, and in education the development of new and more effective online offerings may upend the business of higher education.

This wave, like its predecessors, is likely to bring vast improvements in living standards and human welfare, but history suggests that society’s adjustment to it will be slow and difficult. At the turn of the 20th century writers conjured up visions of a dazzling technological future even as some large, rich economies were limping through a period of disappointing growth in output and productivity. Then, as now, economists hailed a new age of globalisation even as geopolitical tensions rose. Then, as now, political systems struggled to accommodate the demands of growing numbers of dissatisfied workers.

Some economists are offering radical thoughts on the job-destroying power of this new technological wave. Carl Benedikt Frey and Michael Osborne, of Oxford University, recently analysed over 700 different occupations to see how easily they could be computerised, and concluded that 47% of employment in America is at high risk of being automated away over the next decade or two. Messrs Brynjolfsson and McAfee ask whether human workers will be able to upgrade their skills fast enough to justify their continued employment. Other authors think that capitalism itself may be under threat.

The global eclipse of labour

This special report will argue that the digital revolution is opening up a great divide between a skilled and wealthy few and the rest of society. In the past new technologies have usually raised wages by boosting productivity, with the gains being split between skilled and less-skilled workers, and between owners of capital, workers and consumers. Now technology is empowering talented individuals as never before and opening up yawning gaps between the earnings of the skilled and the unskilled, capital-owners and labour. At the same time it is creating a large pool of underemployed labour that is depressing investment.

The effect of technological change on trade is also changing the basis of tried-and-true methods of economic development in poorer economies. More manufacturing work can be automated, and skilled design work accounts for a larger share of the value of trade, leading to what economists call “premature deindustrialisation” in developing countries. No longer can governments count on a growing industrial sector to absorb unskilled labour from rural areas. In both the rich and the emerging world, technology is creating opportunities for those previously held back by financial or geographical constraints, yet new work for those with modest skill levels is scarce compared with the bonanza created by earlier technological revolutions.

All this is sorely testing governments, beset by new demands for intervention, regulation and support. If they get their response right, they will be able to channel technological change in ways that broadly benefit society. If they get it wrong, they could be under attack from both angry underemployed workers and resentful rich taxpayers. That way lies a bitter and more confrontational politics.
